COURSE EVALUATIONS

Using Natural Language Processing to gain additional insights from Course Comments

WARNING

Course Evaluations are easily biased

Before going any further, I have to acknowledge that course evaluations are easily biased.

Things that influence course ratings include:

- What grade the student got (Felton et al. 2004)
- The perceived race of the professor (Reid 2010)
- How attractive the professor is (Rosen 2017)
- Whether cookies were available during class (Hessler et al. 2018)
- The weather the day the student completed the evaluation (Braga et al. 2011)

Additionally, all the data I am going to show is aggregated at the course level before comparison, so most values are averages. This is a limitation of how I got the data.

Given that, what can we learn from course evaluations?

I collected 1,300+ course evaluations

- Spread out over the last 5 years across 8 different departments at Northwestern University
- Includes over 28,000 unique course comments
- Ratings include:
  - Overall rating of course
  - Overall rating of instruction
  - How much [the student] learned
  - The reason for taking the course
  - Interest in the course at the start

Some things made sense

- As the instructor rating improved, so did the course rating (r-squared = 0.74)
- As the amount the student learned increased, so did the course rating (r-squared = 0.64)
- The same holds for student interest going into the course (r-squared = 0.29)
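
The page does not say how the r-squared values were computed. For a straight-line fit with a single predictor, r-squared equals the squared Pearson correlation, so a minimal sketch under that assumption looks like this. The function name `r_squared` and the sample numbers are made up for illustration and are not the project's data.

```python
def r_squared(xs, ys):
    """Return the squared Pearson correlation of two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of the same length (at least 2 points)")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of cross-deviations and sums of squared deviations.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("r-squared is undefined when one variable is constant")
    return (sxy * sxy) / (sxx * syy)


# Made-up course-level averages, for illustration only (not the project's data).
instructor_rating = [4.1, 4.8, 5.2, 5.6, 5.9]
course_rating = [3.9, 4.5, 5.0, 5.1, 5.7]
print(round(r_squared(instructor_rating, course_rating), 2))
```

Each point here stands for one course, matching the course-level aggregation described earlier.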

Surprisingly, course requirement does not affect interest

Everyone likes to complain about being required to take a course. However, the data does not support this: whether a course is required hardly matters (r-squared = 0.01).

Note that the points are aggregated at the course level, so the x axis shows the percent of students who were required to take the class.

What about the comments?

We can use Natural Language Processing (NLP) to algorithmically analyze course comments and try to gain additional insights into how to improve our teaching.

Repeatable

Once programmed, you can easily expand and repeat the same processing pipeline

Volume

You can process a large volume of data quickly (for instance the 28,000+ comments analyzed here)

Unexpected ideas

Because the program is more agnostic of human assumptions, it can lead to unexpected conclusions

Easier to correct bias

While not free of bias, it can be easier to correct bias in an algorithm compared to changing the bias of a human being

Benefits of NLP

Comment Polarity

Comment Polarity captures how positive or negative a comment is

Polarity is scored on a scale from -1 (very negative) to +1 (very positive), using a scoring method derived from movie ratings
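
To make the idea of a polarity score concrete, here is a toy sketch that averages per-word scores from a tiny hand-written lexicon. This is not the scoring method used in the project: the lexicon, its scores, and the function name `comment_polarity` are all hypothetical, and a real tool would rely on a much larger scored vocabulary.

```python
import re

# Hypothetical toy lexicon: word -> score in [-1, 1]. Illustration only.
TOY_LEXICON = {
    "great": 0.8,
    "amazing": 0.9,
    "helpful": 0.5,
    "boring": -0.6,
    "confusing": -0.5,
    "terrible": -1.0,
}


def comment_polarity(comment: str) -> float:
    """Average the scores of lexicon words in the comment; 0.0 if none match."""
    words = re.findall(r"[a-z']+", comment.lower())
    scores = [TOY_LEXICON[w] for w in words if w in TOY_LEXICON]
    if not scores:
        return 0.0  # treat comments with no scored words as neutral
    return sum(scores) / len(scores)


print(comment_polarity("Great professor, really helpful office hours"))
print(comment_polarity("The lectures were boring and the exams were confusing"))
```

Because every lexicon score lies between -1 and +1, the average does too, which gives the same scale described above.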

Comment Topic

We can algorithmically create comment topics using Latent Dirichlet Allocation (LDA). The topics can then be compared to see whether people talking about a certain topic is a good or bad sign.

The algorithm produced the 6 topics listed below

Metrics of NLP

So what are the topics?

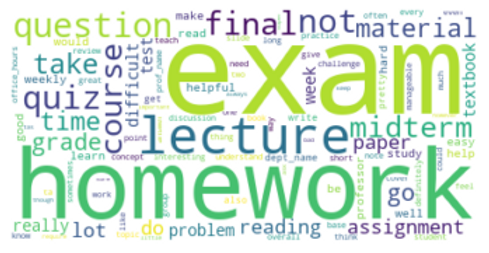

Coursework

The first topic consists of talking about the work associated with the course. This topic is dominated by words like exam, homework, material, and quiz.

Subject

The second topic is about the subject matter, with words like course, learn, and the department or subject name (dept_name)

Professor - Personal

This topic is defined by the "prof_name" tag, which means the comment mentioned the professor by name.

Professor - Formal

This topic is defined by referring to the professor and the course, but in a more general, more formal sense.

Difficulty

This topic is defined by talking about how difficult the course is. Importantly, this topic includes comments about the course being easy as well as comments about it being hard.

Interest

This topic is defined by comments on how interesting or enjoyable the course is.

What can comments tell us?

Polarity aligns with course rating

As expected, the more positive the average comment on a course is, the higher the course rating is (r-squared = 0.58).

Remember that this is aggregated at the course level, so the comment polarity is the mean polarity of all comments for a given course.
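
The course-level aggregation can be sketched in a few lines. The function name `mean_polarity_by_course`, the course IDs, and the scores below are hypothetical and only show the shape of the step.

```python
from collections import defaultdict
from statistics import mean


def mean_polarity_by_course(rows):
    """Map each course ID to the mean polarity of its comments.

    rows is an iterable of (course_id, polarity) pairs, one per comment.
    """
    grouped = defaultdict(list)
    for course_id, polarity in rows:
        grouped[course_id].append(polarity)
    return {course_id: mean(scores) for course_id, scores in grouped.items()}


# Made-up comment-level scores, for illustration only.
rows = [
    ("COURSE_A", 0.5),
    ("COURSE_A", -0.5),
    ("COURSE_B", 1.0),
]
print(mean_polarity_by_course(rows))
```

Each course then contributes a single point to the comparison with course rating, no matter how many comments it received.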

The most common topic relates to course rating

- When grouped by which comment topic was most common, some clear trends emerge
- Mentioning the professor's name is the best sign
- Talking about the professor and the subject is also good
- In comparison, if the comments focus on the difficulty of the course, that's a bad sign
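
One way to sketch this grouping, assuming every comment has already been assigned a single topic label: find the most common label per course, then average the course ratings within each group. The function names, topic labels, and ratings below are made up for illustration and are not the project's results.

```python
from collections import Counter, defaultdict
from statistics import mean


def most_common_topic(topics):
    """Return the most frequent label; ties go to the label seen first."""
    return Counter(topics).most_common(1)[0][0]


def mean_rating_by_top_topic(courses):
    """Average course rating, grouped by each course's most common topic."""
    by_topic = defaultdict(list)
    for course in courses:
        top = most_common_topic(course["comment_topics"])
        by_topic[top].append(course["rating"])
    return {topic: mean(ratings) for topic, ratings in by_topic.items()}


# Made-up courses, for illustration only.
courses = [
    {"rating": 5.0, "comment_topics": ["prof_personal", "prof_personal", "interest"]},
    {"rating": 4.0, "comment_topics": ["difficulty", "difficulty", "coursework"]},
    {"rating": 3.0, "comment_topics": ["difficulty", "subject", "difficulty"]},
]
print(mean_rating_by_top_topic(courses))
```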

So what does it all mean?

Requirement

Whether or not a class is required does not affect students' interest in the class

When designing a major, think about what students need to learn and don't worry about making those classes required

Polarity

Comment polarity correlates well with course rating

Not surprising, but it confirms that comments and ratings align

Topics

Comments that mention a professor by name are the best, so try to establish a personal connection.

Similarly, think about the difficulty of the course: a course that is too easy or too hard will lead to a lower course rating.

This project was completed as part of the Searle Teaching as Research program

Special thanks to Rob Linsenmeier and Lauren Woods for advising me during this project

All citations are linked above